Continuum Computing Trustworthiness Research Group

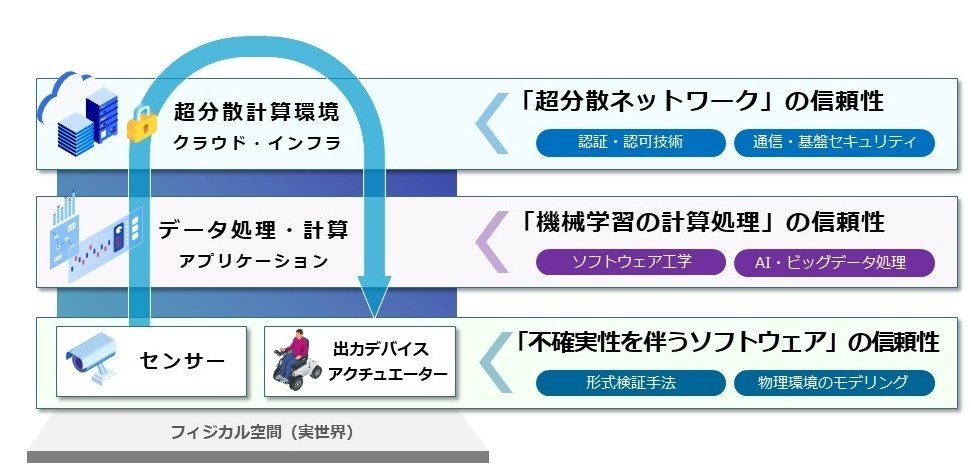

The Continuum Computing Trustworthiness Research Group is working on (1) the development of guidelines for the quality of AI systems and their social implementation; (2) research on quality evaluation and management for AI systems; (3) research on software technology to evaluate and improve the trustworthiness of real-world systems with uncertainty; and (4) standardization and social implementations related to digital architecture.

Research Topics

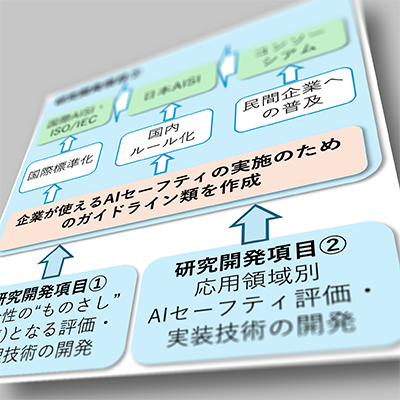

✪ The development of guidelines for the quality of AI systems and their social implementation

We develop the "Machine Learning Quality Management Guideline" to establish quality goals and development processes for products and services using machine learning.

- Machine Learning Quality Management Guideline (AIST Committee for Machine Learning Quality Management)
  - 3rd English Edition (January 2023)
  - 4th Japanese Edition (December 2023)
- International standardization
  - Activity on ISO/IEC TR 5469:2024

✪ Research on the quality evaluation and management for AI systems

We develop methods to improve and evaluate the implementations of machine learning algorithms, models, and systems from a software engineering perspective.

- Machine Learning Quality Management Project (NEDO-funded project)
  - Open testbed toolset Qunomon for the quality management of AI systems
- JST FOREST project
- JSPS project

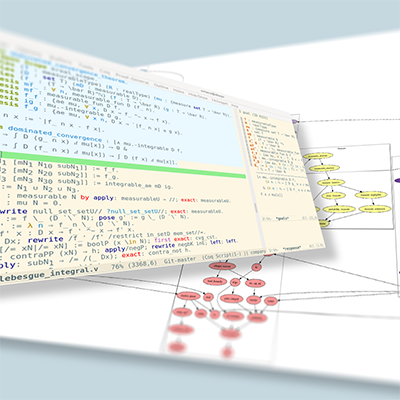

✪ Formal methods for software systems with uncertainty

Our third focus is to evaluate and certify the trustworthiness of software systems with uncertainty, such as cyber-physical systems. We develop formal methods for modeling and verifying software systems that deal with sources of uncertainty such as probabilistic events and physical environments. We also conduct foundational research on programming languages and interactive theorem provers.

- Formal verification of software and mathematics: program verification, program generation, mathematics, information security, robotics (in collaboration with Inria, Nagoya University, and others)
- Integration of formal methods and statistical methods: JST PRESTO, French-Japanese project LOGIS
- Education at the Nara Institute of Science and Technology (NAIST) Formal Verification Lab
- Recruiting:
  - We are recruiting a young permanent researcher on this research topic.
  - We are accepting applications from prospective graduate students for the Formal Verification Lab.

✪ Standardization and social implementations related to digital architecture

- Standardization activities and related research

- Social implementation through contributions to the open-source software community

Group Members

- Group Leader: KAWAMOTO, Yusuke (yusuke.kawamoto[at]aist.go.jp)
- Chief Senior Researcher: AFFELDT, Reynald (reynald.affeldt[at]aist.go.jp)
- Senior Researcher: TANAKA, Akira (tanaka-akira[at]aist.go.jp)
- Senior Researcher: KITAMURA, Takashi (t.kitamura[at]aist.go.jp)
- Researcher: BOHRER, Rose (rose.bohrer[at]aist.go.jp)
- Researcher: SATO, Sota (sota.sato[at]aist.go.jp)
- Guest Researcher: NAKAJIMA, Shin (nakajima-shin[at]aist.go.jp)
- Guest Researcher: MARUYAMA, Fumihiro (maruyama.f[at]aist.go.jp)
- Guest Researcher: KAWAO, Kazuya (kawai.kazuya[at]aist.go.jp)
- Guest Researcher: SUZUKI, Toshihiro (toshi.suzuki[at]aist.go.jp)
- Guest Researcher: EGAWA, Takashi (takashi.egawa[at]aist.go.jp)
- Guest Researcher: NAMBA, Takaaki (nanba.takaaki[at]aist.go.jp)
- Guest Researcher: OKAMOTO, Tamao (okamoto.tamao[at]aist.go.jp)
- Guest Researcher: TAGUCHI, Kenji (kenji.taguchi[at]aist.go.jp)
- Guest Researcher: FUKUZUMI, Shinichi (kame-fukuzumi[at]aist.go.jp)
- Guest Researcher: BANA, Gergely
- Research Specialist: IWASE, Yuta
- Research Specialist: HAYASHITANI, Masahiro
- Research Specialist: MIYAKE, Kazumasa
- Technical Staff: IMAI, Yoshihiro
- Research Assistant: ISHIGURO, Yoshihiro
- Research Assistant: KOBAYASHI, Kentaro
- Research Assistant: KOBAYASHI, Akio
- IPRI Deputy Director: OIWA, Yutaka (y.oiwa[at]aist.go.jp)
- IPRI Principal Research Manager: KONISHI, Koichi (k.konishi[at]aist.go.jp)
- Standardization Officer (Intellectual Property and Standardization Promotion Division): SEO, Yoshiki (y.seo[at]aist.go.jp)
- Chief Collaboration Officer (ITH Collaboration Promotion Office): SUGIMURA, Ryoichi (sugimura.roy[at]aist.go.jp)